Long Memory in Financial Time Series

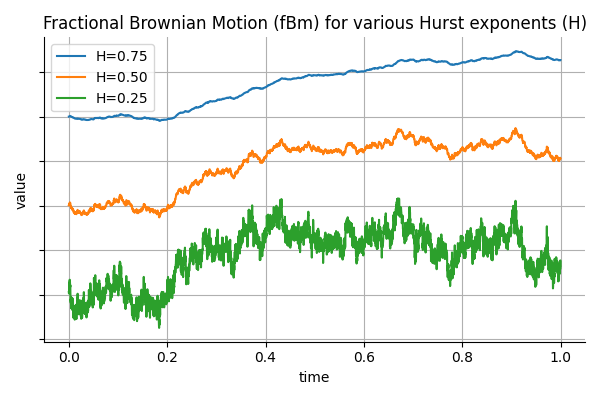

In finance, it is common to model asset prices and volatility with stochastic processes whose increments are independent, such as geometric Brownian motion. However, empirical evidence suggests that many financial time series exhibit long memory, or persistence. For example, volatility shocks can persist over extended periods, and high-frequency order flow often displays non-negligible autocorrelation. To capture such behavior, fractional Brownian motion (fBm) provides a flexible framework in which the memory of the process is governed by a single parameter: the Hurst exponent H, which lies between 0 and 1. Setting H = 1/2 recovers standard Brownian motion with independent increments, H > 1/2 gives positively correlated (persistent) increments, and H < 1/2 gives negatively correlated (anti-persistent) increments.
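To make the role of the Hurst exponent concrete, the sketch below evaluates the standard autocovariance of unit-variance fBm increments (often called fractional Gaussian noise) at a few lags. The function name `fgn_autocovariance` and the chosen values of H are illustrative assumptions, not taken from the article.

```python
def fgn_autocovariance(hurst: float, lag: int) -> float:
    """Autocovariance of unit-variance fractional Gaussian noise at an integer lag.

    Uses gamma(k) = 0.5 * (|k+1|^(2H) - 2|k|^(2H) + |k-1|^(2H)).
    """
    if not 0.0 < hurst < 1.0:
        raise ValueError("Hurst exponent must lie strictly between 0 and 1")
    k = abs(lag)
    two_h = 2.0 * hurst
    return 0.5 * ((k + 1) ** two_h - 2.0 * k ** two_h + abs(k - 1) ** two_h)


if __name__ == "__main__":
    # Illustrative Hurst values: anti-persistent, memoryless, persistent.
    for hurst in (0.3, 0.5, 0.7):
        values = [fgn_autocovariance(hurst, lag) for lag in (1, 2, 5, 10)]
        print(f"H={hurst}: " + ", ".join(f"{v:+.4f}" for v in values))
```

For H = 1/2 the autocovariance vanishes at every nonzero lag, which matches the independent-increment case. For H > 1/2 it stays positive and decays slowly with the lag, which is the long-memory behavior described above.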
